How many times we have calls from users complaining about their process that failed due to an ORA-01555 error?

We know that if the queries are not well tuned and they modify a lot of data, the image held in the UNDO Tablespace could not be consistent with the real data. But have you ever seen this error right away after executing a SQL statement against a table?

I just did couple of days ago. Here’s the story:

ORA-01555 error appeared in the alertlog’s database with a query duration of 0 seconds.

ORA-01555 caused by SQL statement below (SQL ID: d3rt4tyudufeu, Query Duration=0 sec, SCN: 0x034f.34f660b4)

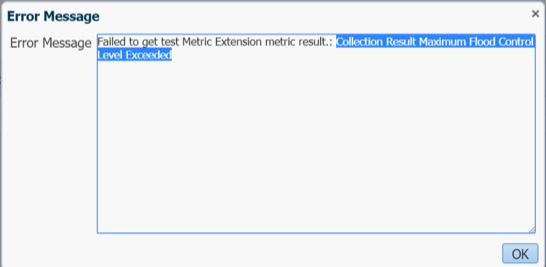

Any queries plus an analyze table failed right away with ORA-01555:

ERROR at line 1: ORA-0155: snapshot too old: rollback segment number 10 with name “SYSSMU11_1072300523734$” too small

So weird.

After researching a bit on MOS, found a note regarding a bug.

Some minutes later we also started to receive ORA-600 errors related so scn numbers.

ORA-error stack (00600[ktbdchk1: bad dscn])

The MOS note mentions the ORA-01555 and the ORA-600 errors as part of bug 22241601 with a Dataguard configuration. Is worth to mention that yes, we were doing switchover testing recently in this 12.1.0.2 environment.

ALERT Bug 22241601 ORA-600 [kdsgrp1] / ORA-1555 / ORA-600 [ktbdchk1: bad dscn] / ORA-600 [2663] due to Invalid Commit SCN in INDEX (Doc ID 1608167.1)

The solution is to apply the patch but there’s also a tested workaround that is to rebuild online all the indexes of that table.

Hope this helps.

Alfredo